We’re Waking Up Demons

Recently I was talking about how modern transformer models are basically universe simulators (don’t worry about missing it at the time, nobody reads these things) and this week openai released their universe simulation platform (masquerading as a “text to video” experiment).

let’s talk about it.

Demons Awake

if you wish to make an apple pie from scratch / you must first invent the universe

We’ve had GAN-ified “this person does not exist” capabilities for 8-10 years now. We can even run them locally these days.

There is something much more disturbing about creating videos of people who do not exist.

Especially when these people look directly into the camera and interact with the viewer.

There’s something more un-souling about seeing statistically generated people in motion with attempted export-only emotion: how dare you smile and wave at us, you do not exist! Also we must ignore how she’s more like a metallic T-1000, effortlessly instantiating a metal spoon out of her own flesh.

The problem is: there is no there there. We’re presented with a ghoul. The smile of a sociopath. You could imagine her saying hello just as easily as you could imagine this false person using her magic finger-spoon to scoop your eyes out while laughing at you. Computational zero-predictable demons. There is no continuity of experience. There is no purpose or intent or character-consistent knowledge to express or send or receive. Without being able to create and persist the equivalent of “life experience” for altpeople, it just becomes a tool for laundering manipulative ideas through false idols.

What Have We Done

the blind will see / the deaf will hear / the dead will live again

Scenarios to consider:

- people who want to ignore a failed relationship and just continue simulating it forever

- implementation of false trigger orders: “this is my last message. future messages are compromised. accept no further communications. begin the attack now.”

- combine real time generation with everybody’s favorite computer hat for instant dystopia-to-go

- re-simulating departed friends or relatives

- simulating famous people for interactive parasocial relationships

- simulating your enemies for fun and profit

- we already have problems with people generating “fake executive headshots” for thousands of fake website scams, but now imagine you run entire autonomous fake companies with fake interactive people consistent across time backed both by nothing and by everything all at once.

We already had endless auto-generated people problems during the downfall of twitter. After all the high value brand advertisers stopped paying twitter, the ad buy rates became so cheap that now every sleazy “grindcore” dropshipping “entrepreneur” is creating hundreds of fake person profiles to run ads for basic aliexpress or temu 30¢ items they try to resell for $30.

Combine all the ad-scam fake profiles with the 2016-era hundreds of russian puppet/bot accounts where “ROY FROM KANSAS” has a 3 month old account with 800,000 “followers,” all part of the same follow-circle-botnet where every account writes 1 politically aggressive post every 30 seconds for 15 months without ever sleeping, and they have hundreds if not thousands of accounts doing the same thing. Twitter famously said they “can’t take action” against clearly fraudulent political manipulation accounts because the republican outrage machine attacks them when “ROY FROM KANSAS,” who is very serious about “warm water ports” (and constantly advocating for american civil war), gets restricted because MUH FREE SPEECH GUT VUHLATEHD. This also pairs nicely with the endless number of accounts constantly replying to anything remotely current event related (only during russian daytime hours of course) by interjecting “I AM FORMER LIFE LONG DEMOCRAT AND HERE IS WHY I WILL NEVER VOTE DEMOCRAT AGAIN!!!!!”

A Model is A Model is a Model is a Model is a

we have failed the future inhabitants of the wounded world

Here we have an auto-generated video showing a recording of somebody generating art inside the auto-generated video.

Take the idea a step further: a video of somebody going to the zoo for a day, then writing about their experience while the camera watches the paper (or screen). Now we have a generated output generating content inside the generated simulation.

Take the idea a couple steps further: a video of somebody writing their own prompts, which the outer simulation substrate consumes by reading the output from the in-simulation characters, then continues modifying their simulation as they wish. Now maybe imagine the platform is running as the computational backend of a dyson swarm and could generate trillions of entities while needing to remain self-consistent. Not impossible given scale.

maybe just keep it real just a little while longer at least as long as we can

Defense

What is the best defense against being AI replicated against your will? You need to protect against three main components of replication: your appearance, your voice, your personality.

Well, just make sure there are no pictures of you anywhere and make sure there are no videos of you anywhere and make sure nobody ever has a recording of your voice.

This also means never appearing outside in a city or residential area where a private or government surveillance camera could see you. This also means never appearing outside where a high resolution drone or helicopter or circling plane or spy satellite can see you. This also means never speaking outside in range of microphones attached to any of those devices. This also means never placing or receiving phone calls over legacy phone networks.

This also means you should never write anything down, never participate in online chats in public or in private for personal or work use, never send emails to any of the big email providers, never use social networking platforms, and don’t let anybody else mention you or your actions or words in any of those contexts either.

simple, no?

The only true defense is to be continually malleable and unpredictable so the hypertrained what-comes-next AI systems can’t predict your next monkey.

More thought experiments:

- imagine training a world model on two decades of every phone call made across the entire planet

- during their first investor call of the year, facebook made a point to mention they also have a private collection of 20 years of text and photo and video data for two billion people they can use for their own AI training anytime they want

- imagine having access to every email ever written over the past 20 years to also train your models (hello gmail)

On the other side, people in general are low dimensional entities with known response profiles. heck, you can simulate an entire dating site chat app by just having characters say “wyd” and “nm” a million times in a row and it would be indistinguishable from 99% of humans using the apps.

Conclusion

A scam going around recently: some spammer calls you, and if you answer with a regular “hello?” the scammers record your voice and hang up. Then, using the sprawling trove of leaked data from company security breaches or just online searches, the scammers call your family or friends or coworkers using your voice as “proof” they have kidnapped you, and they demand payment.

Takeaway 1: never answer the phone with “hello” anymore I guess?

Takeaway 2: now assume the same scam (hello all of india and russia and china i guess? shouldn’t we just cut off the internet from places where we know there are buildings full of thousands of people whose only purpose is “exploit the west using personal financial scams over the internet?”) is using your online photos and videos to generate 99.9999999% indistinguishable from reality video simulations of you being trapped or tortured to “motivate” the victims into immediate financially damaging action more readily.

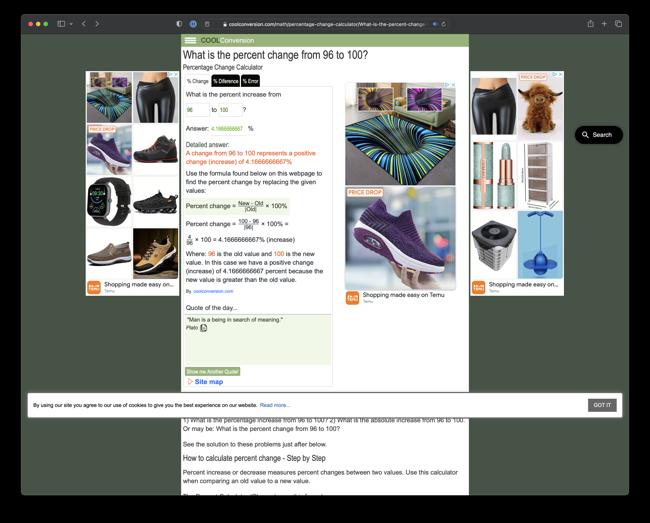

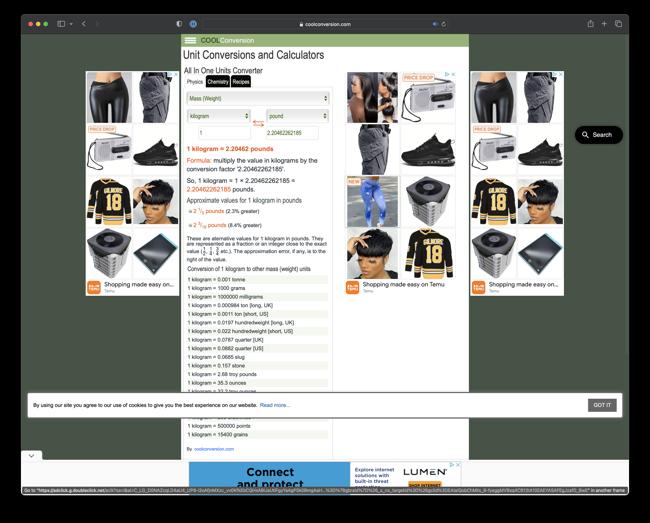

We can’t stop the math. We can’t stop the signal. We can’t stop the compute because quantized models work fine too. We can’t make people better. We can’t contain the scammers and computational criminals. We can’t even stop google from continuing to ruin the Internet by up-ranking every spam site with 75 google ad units per page and 20,000 scraped and auto-generated content pages, now masquerading as a “personal blog” (“personal blog” of someone who apparently writes 20,000 articles across 300 different topic areas, and there are thousands of people doing the same thing with the same site template and same ad unit layouts… or it’s another “ruin the entire corpus of search results just to make a passive $3/day from scam ads”). Do we just return to the trees?

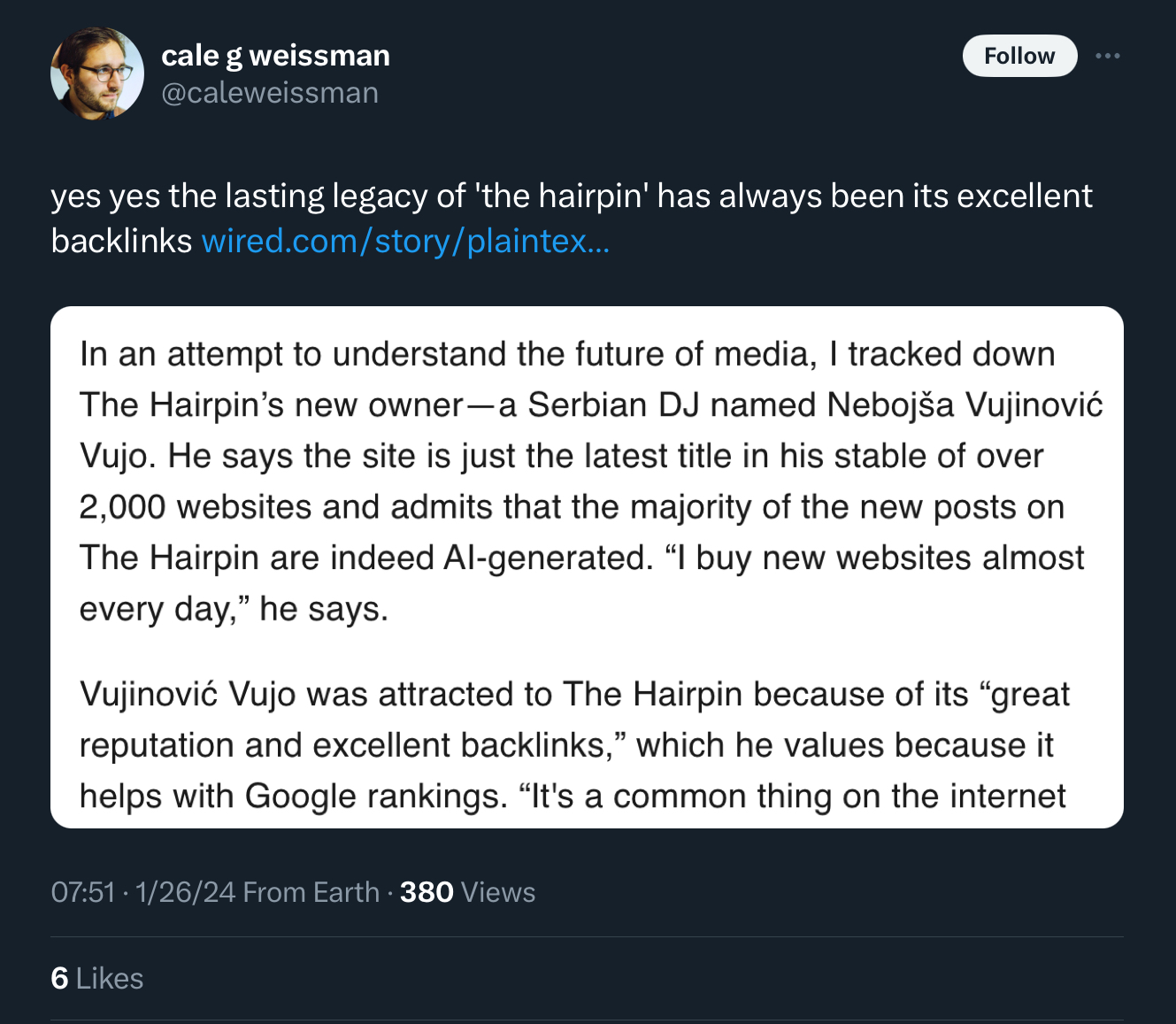

Scam Gallery

Maybe we should also disconnect Serbia from the Internet if it’s a source of people exploiting the world for low effort AI-based crapification at scale? The cross-country economic inequality makes it worthwhile for “low effort low tech business bros” to destroy the entire Internet with no remorse or conscience just to make $3/day per site from 100,000,000 hijacked sites showing infinite low quality google ad units.

Return to the trees.